By Nick Griffin

The dramatic Pentagon shift from Anthropic’s “Claude” to OpenAI, led by Sam Altman, has been overshadowed by Donald Trump’s own pivot from his “make America great again” rhetoric to “make Israel great again” reality. But the abrupt switch in providers for the super-brain supposedly being developed for America’s military has still caused excitement in the industry and among investors. Anthropic describes itself as an “artificial intelligence (AI) safety and research company” working to “safely and responsibly integrate AI into the daily lives of Americans.”


Some saw the move as surprising, given that Claude provided the AI brains behind Trump’s technically perfect Venezuela coup. Others have taken heart from the fact that the president’s stated reason for dropping Anthropic’s AI system was that the company was run by “leftwing nut jobs.”

Defense Secretary Pete Hegseth jumped in, labeling Anthropic a “supply-chain risk to national security” after Anthropic CEO Dario Amodei refused to drop the company’s limited safeguards against reckless use of AI or government abuse.

Anthropic’s built-in restrictions prohibited its use for fully autonomous weapons or mass domestic surveillance of Americans. Amodei highlighted hypothetical scenarios like nuclear strikes where, unchecked, AI could escalate conflicts disastrously. These were red lines rooted in the company’s stated commitment to responsible AI.

The Pentagon viewed these safeguards as an unacceptable intrusion into military command, demanding that the model be available for “all lawful uses.” That is an extremely wide category under a Caesar seemingly hell-bent on ditching all the checks and balances that the Founding Fathers put into the Constitution. The spat ended with Anthropic being blacklisted, effectively barring it from future deals with the U.S. government and putting all the Pentagon’s AI eggs in Altman’s basket.

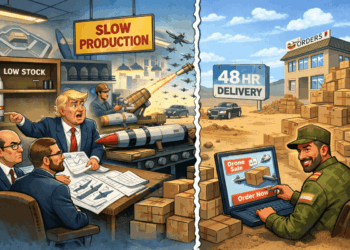

The rush to put AI at the heart of America’s military has really taken off since the start of this year. The Department of Defense—rebranded as the Department of War—has escalated its efforts to embed AI across every facet of military operations, from battlefield intelligence to cyber defense and autonomous systems.

Officially, this is driven by a fear of falling behind adversaries like China in what officials term an “AI arms race.” It isn’t merely about efficiency; it’s about achieving “AI overmatch,” where algorithms process vast data streams faster than human minds, closing kill chains in seconds and enabling decisions that outpace enemies and pierce the “fog of war.”

As always with matters involving the Military-Industrial Complex, however, the vast sums of money involved clearly provide a massive incentive for rose-tinted analysis of bold claims about the latest technology. Cynical observers may see the new alliance between Big Tech and the Military-Industrial Complex less as innovation than as a reckless gamble driven by greed and the bottom line of corporations that have procurement bosses and lawmakers in their pockets.

A staggering $13.4 billion for AI is listed in the 2026 budget alone, a sevenfold increase from last year. This is being poured into unproven technologies which will automate and revolutionize warfare, but also amplify surveillance, erode human oversight, empower computers that place no value on human lives, and enrich private contractors under the guise of national security.

With such enormous sums involved, it should come as no surprise that Anthropic and OpenAI share many of the same investors. At least a dozen venture firms, including Sequoia Capital, Founders Fund, Iconiq, and Insight Partners, have backed both companies. BlackRock affiliates invest in Anthropic despite board ties to OpenAI.

Despite the overlap, there is a broad division between the two operations. Their rivalry traces back to a foundational schism in 2020, when key OpenAI figures, including the Amodei siblings, departed amid profound disagreements over the pace of AI development, safety protocols, and the influence of commercialization. Chief among these concerns was Microsoft’s multibillion-dollar stake, which critics argue tilted OpenAI toward profit-driven acceleration over rigorous risk mitigation.

This split birthed Anthropic as a deliberate counterpoint, emphasizing “alignment research” to ensure AI systems adhere to human values. This is reflected in Claude’s “constitutional AI” framework, which embeds principles like harmlessness and helpfulness directly into model training. By contrast, Altman’s OpenAI embodies an “accelerationist” ethos, a hell-for-leather dash for AI dominance, regardless of the potential risks.

Anthropic has actively lobbied for stringent regulations, donating $20 million in early 2026 to Public First Action, a super-PAC supporting state-level AI oversight to counter existential risks from superintelligent systems. OpenAI favors rival lobby group Leading the Future, which has amassed $125 million to advocate for minimal restrictions, framing safeguards as stifling innovation and economic growth. This clash erupted publicly during the 2026 Super Bowl, where Anthropic’s ads attacked OpenAI’s “reckless” speed, prompting rebuttals from OpenAI President Greg Brockman.

It is worth noting that OpenAI’s head of robotics, Caitlin Kalinowski, resigned immediately after Altman’s victory, citing ethical concerns over “lethal autonomy without human authorization” and unchecked surveillance.

If this, combined with the presence of Altman at the head of OpenAI, inclines you to prefer Anthropic, remember that both operations use arch-Zionist Peter Thiel’s Palantir to handle their data integration, security permissions, and workflows. Whoever supplies the AI model, Palantir builds the systems that allow the government and defense agencies to apply such models to real-world data and surveillance operations.

Despite the broad Microsoft-OpenAI versus Amazon-Google-Anthropic “split,” both are part of the same globalist corporate hydra. Whatever the natural concerns about the future impact of this technology, it can be argued that the United States has no choice. China is investing heavily in similar capabilities, and failure to integrate AI rapidly could leave U.S. forces at a strategic disadvantage.

America’s military had to throw itself into the AI arms race, but it’s a sort of Faustian bargain. Trump and Hegseth had a choice of two devils, and handed America’s dark military-industrial soul over to the one who is somewhat more “accelerationist” than the other.

From the standpoint of critics who distrust both Big Tech and the national security apparatus, the episode illustrates a familiar dynamic. A technology company attempts to impose ethical limits; those limits collide with the strategic ambitions of the state; another company steps in willing to be more accommodating; and the technology gets embedded in systems of surveillance, intelligence and warfare.

In this sense the conflict over Claude and the Pentagon’s subsequent embrace of OpenAI is a preview of future struggles. As artificial intelligence becomes more powerful, governments will increasingly seek to harness it. Technology firms will compete for those partnerships while attempting to maintain public trust by promising safeguards. The tension between commercial ambition, ethical restraint, and military and government demands will likely intensify.

The bottom line, however, is this: Trump’s Department of War is committed to ever more aggressive foreign adventures, Big Tech is going to make ever bigger profits from helping it happen, and your taxes—or a mountain of debt piled on your grandchildren’s shoulders—are going to pay for it all.

Unless some AI devil in the war machine decides that a nuclear war would provide the “final solution” to the “human problem.”

Nick Griffin is a British nationalist commentator and writer. He was chairman of the British National Party (BNP) from 1999 to 2014, and a Member of the European Parliament for North West England from 2009 to 2014. Since then, Griffin has remained active in British politics despite being vilified for criticizing rampant immigration. You can read his work on Substack at “Nick Griffin Beyond the Pale” and on Telegram t.me/NickGriffin.
